Another paper my friend referred me to tells an anecdote about a dog and a frisbee. The main idea is as follows: catching a frisbee is a very hard problem. If one wanted to model it with physics, chances are the model would get very complex very quickly. Similarly, good luck solving it with AI/deep learning: you would need loads of data and very complex models.

However, there is a very simple heuristic that works most of the time: "run at a speed so that the angle of gaze to the frisbee remains roughly constant". This simple heuristic agrees with how dogs and humans seem to catch frisbees in real life. The moral of the story is:

sometimes the best way to solve complex problems is to keep them simple
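To make the gaze heuristic a bit more concrete, here is a minimal sketch under simplifying assumptions that are mine, not the paper's: a drag-free ball instead of a frisbee, motion in a vertical plane, and a fielder who switches the heuristic on at roughly the top of the ball's flight. The function names and numbers are hypothetical. What it illustrates is that the fielder never predicts where the ball will land; it only holds the angle of gaze fixed, and still ends up where the ball comes down.

```python
G = 9.81  # gravitational acceleration in m/s^2


def ball_position(t, vx, vy):
    """Drag-free projectile launched from the origin (a simplifying assumption;
    a real frisbee glides and does not follow a parabola)."""
    return vx * t, vy * t - 0.5 * G * t * t


def gaze_heuristic_run(vx, vy, fielder_x, t_start, n_steps=1000):
    """Hypothetical helper: keep the gaze angle to the ball fixed from t_start
    until the ball lands.

    The fielder never computes the landing point. At each step it stands
    wherever the gaze elevation angle equals the angle it saw at t_start.
    Returns the fielder's positions over time and the ball's true landing x.
    """
    t_land = 2 * vy / G
    bx, by = ball_position(t_start, vx, vy)
    # tan of the gaze elevation angle when the fielder starts running;
    # assumes the ball is in the air and still in front of the fielder.
    tan_gaze = by / (fielder_x - bx)

    dt = (t_land - t_start) / n_steps
    path = []
    for i in range(n_steps + 1):
        bx, by = ball_position(t_start + i * dt, vx, vy)
        # Solve by / (x - bx) = tan_gaze for the fielder position x. A real
        # catcher would get here by speeding up or slowing down whenever the
        # angle drifts; this sketch skips that feedback loop for brevity.
        path.append(bx + by / tan_gaze)
    return path, ball_position(t_land, vx, vy)[0]


path, landing_x = gaze_heuristic_run(vx=15.0, vy=15.0, fielder_x=40.0, t_start=1.53)
print(f"ball lands at {landing_x:.2f} m, fielder ends at {path[-1]:.2f} m")
```

The two printed positions should agree: holding the angle constant leads the fielder to the landing point without any trajectory model.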

In my experience, this anecdote applies to most problems in data science. Do you need a recommender system? Try the simple "people who bought this also bought that" heuristic before thinking about machine learning. If you need to classify or predict things, try logistic regression with simple features first. This annoys the hell out of data scientists who are fresh out of a PhD in machine learning, but in my experience a linear model does the job in something like 90% of cases. Implementing a linear classifier in production is super easy and very scalable.
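As an illustration of how little machinery the "people who bought this also bought that" heuristic needs, here is a minimal sketch that simply counts how often pairs of items appear in the same basket. The function names and the toy baskets are hypothetical, and a production version would probably need refinements (for example, discounting items that are popular with everyone), which are left out here.

```python
from collections import Counter, defaultdict
from itertools import permutations


def build_co_purchases(baskets):
    """Count, for every item, how often each other item appears in the same basket."""
    co_counts = defaultdict(Counter)
    for basket in baskets:
        # set() so that buying two of the same item in one basket counts once
        for a, b in permutations(set(basket), 2):
            co_counts[a][b] += 1
    return co_counts


def also_bought(co_counts, item, k=3):
    """Return up to k items most often bought together with `item`."""
    return [other for other, _ in co_counts.get(item, Counter()).most_common(k)]


# Toy purchase history, made up for illustration.
baskets = [
    ["tent", "sleeping bag", "torch"],
    ["tent", "sleeping bag", "torch"],
    ["tent", "sleeping bag"],
    ["tent", "frisbee"],
    ["frisbee", "dog treats"],
]
co_counts = build_co_purchases(baskets)
print(also_bought(co_counts, "tent", k=2))
```

The whole "model" is a table of counts, which is easy to recompute in batch and trivial to serve.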

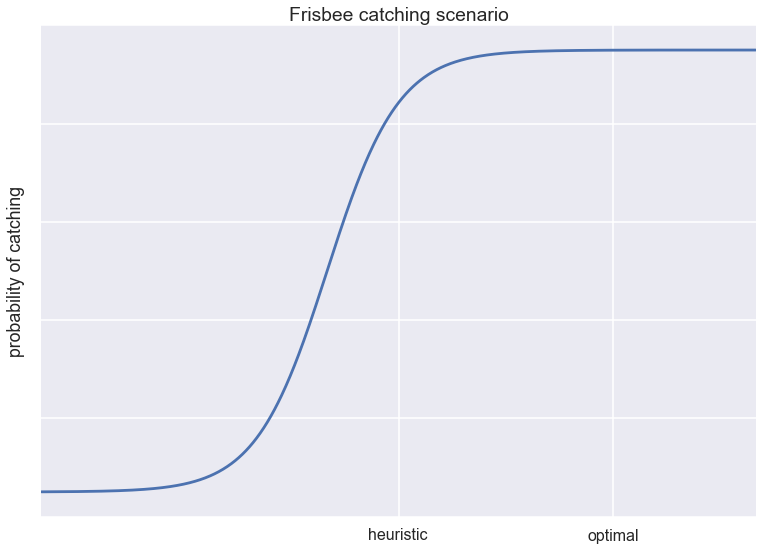

I think about these problems in terms of utility functions, as shown in the figure above. Let's say the utility, or value, of any solution is a sigmoidal function of some raw performance metric. In the frisbee-catching scenario, the utility would be the probability of catching the frisbee. In frisbee-catching-like data science problems, a heuristic already achieves pretty good results, so any complex machine learning solution would be trying to make an impact in the saturated regime of the utility function, where even a large gain in raw performance adds little utility.
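The saturation argument can be checked with a few lines of arithmetic. The sketch below assumes a logistic curve as the sigmoid, with an arbitrary midpoint of 0.5 and steepness of 10 (these numbers are illustrative, not taken from the figure), and compares the utility gained by the same +0.1 improvement in raw performance on the steep part of the curve and in the saturated regime.

```python
import math


def utility(performance, midpoint=0.5, steepness=10.0):
    """Hypothetical sigmoidal (logistic) utility of a raw performance metric in [0, 1]."""
    return 1.0 / (1.0 + math.exp(-steepness * (performance - midpoint)))


def utility_gain(before, after, **kwargs):
    """Utility gained by improving the raw metric from `before` to `after`."""
    return utility(after, **kwargs) - utility(before, **kwargs)


# The same +0.1 improvement in raw performance, at two different starting points.
steep_part = utility_gain(0.45, 0.55)
saturated = utility_gain(0.85, 0.95)
print(f"gain near the midpoint:   {steep_part:.3f}")
print(f"gain in saturated regime: {saturated:.3f}")
```

With these parameters the gain near the midpoint is more than ten times the gain in the saturated regime, which is the situation a fancy model faces when a heuristic is already good.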

The hard problems

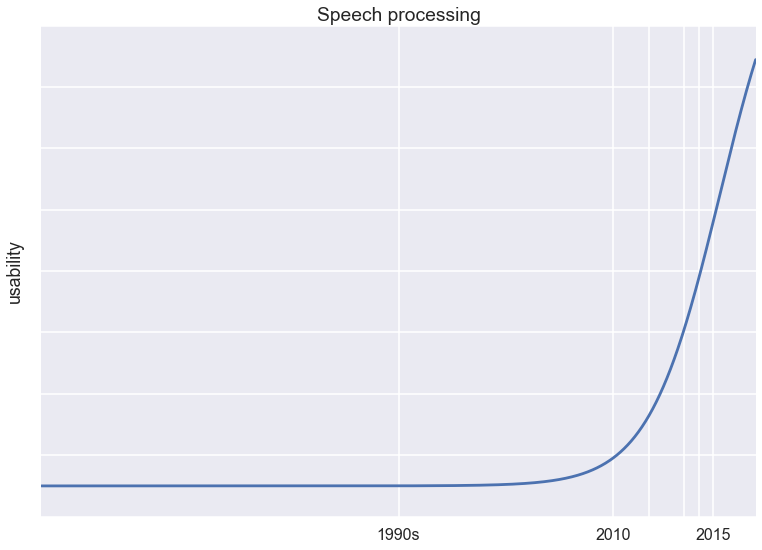

Of course, there are hard problems for which no simple heuristic works yet, and where the only answer today seems to be machine learning. Speech recognition is an example.

I think of the overall utility of a speech recognition system as a steep sigmoidal function of the underlying accuracy. Below a threshold accuracy of, say, 95%, the system is practically useless. Around 95%, things start getting useful. Once this barrier is passed, the system feels near perfect and people start adopting it. This is illustrated in the figure (an illustrative sketch only; the numbers don't mean anything). We have made a lot of progress recently, but breakthroughs in machine learning techniques were needed to get us here. Once we are past the steep part of the curve, improving existing systems will start to have diminishing returns.